- Published on

BA025. Finding the Optimal Boundary — The Math of Grid Search and Youden's J

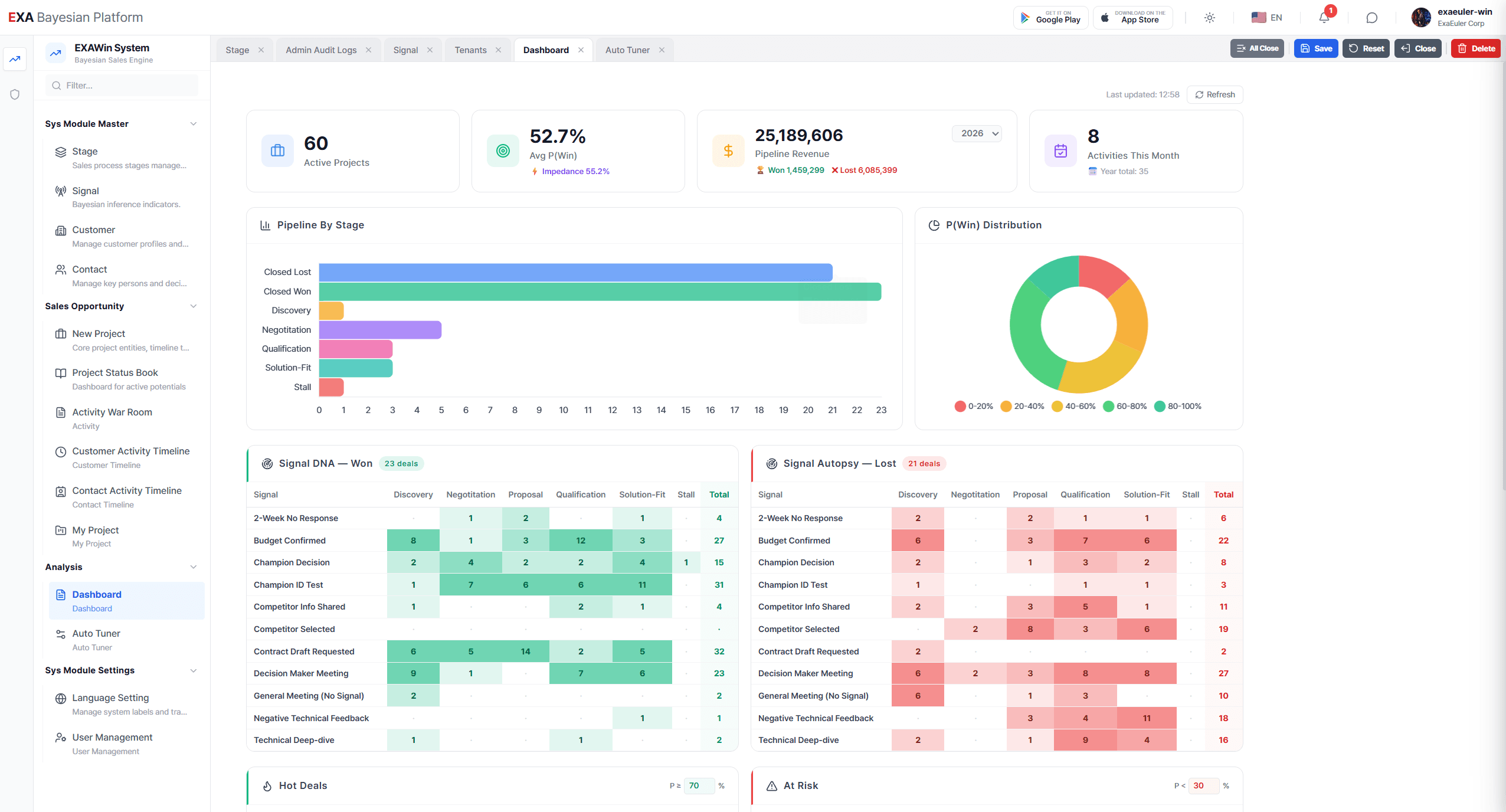

In BA024 (BA024. The Evolution of EXAWin Bayesian Engine: The Day Data Tuned Its Own Parameters), the VP of Sales witnessed Auto-Tuner scanning 3,240 grid points to find optimal parameters. During those 30 seconds as the grid transitioned from blue to red on screen, what mathematics was running inside the engine?

This post explains the mathematical principles behind Auto-Tuner's first pillar — Grid Search Optimization and Youden's J Index — with their business implications.

1. Defining the Problem: What Is a "Good Parameter"?

EXAWin's Bayesian engine depends on two core parameters.

T (Stage Weight): The magnitude of influence that signals occurring at each sales stage (Discovery, Qualification, Solution-Fit, Negotiation, Closing) have on the win probability. A higher T means signals at that stage — positive or negative — move the probability more dramatically.

k (Signal Sensitivity): The coefficient that converts signal strength (weak positive, strong negative, etc.) into Alpha/Beta update amounts. A high k makes the engine react sensitively to each signal; a low k makes it react dully.

The question is straightforward: How should T and k be set so the engine most accurately distinguishes between 'deals that will close' and 'deals that won't'?

To answer this, we first need to define what "accurately distinguish" means mathematically.

2. Reframing as a Classification Problem

At its core, the sales engine is a Binary Classifier.

Every deal ends in one of two outcomes — Won or Lost. The engine calculates a win probability P(Win) for each deal, and if this exceeds a certain Threshold, labels it "likely to win"; otherwise, "at risk."

Four scenarios arise:

| Actually Won | Actually Lost | |

|---|---|---|

| Engine: "Will win" | ✅ True Positive (TP) | ❌ False Positive (FP) |

| Engine: "Won't win" | ❌ False Negative (FN) | ✅ True Negative (TN) |

This table is called the Confusion Matrix — the starting point for evaluating any classifier's performance.

Business Implications

Each cell has very different consequences for a sales organization:

- TP (True Positive): Engine said "will win," and it did. → Operating correctly. Resources deployed wisely.

- FP (False Positive): Engine said "will win," but it failed. → The most dangerous error. The sales team wastes resources chasing false hope while missing other opportunities. This is exactly what happened with the "68% deal that failed" in BA024.

- FN (False Negative): Engine said "won't win," but it actually did. → Opportunity abandoned or under-resourced, potentially missing a larger win.

- TN (True Negative): Engine said "won't win," and it didn't. → Operating correctly. Unnecessary resource waste prevented.

The ideal engine maximizes TP and TN while minimizing FP and FN. But in reality, these exist in a trade-off relationship.

3. Sensitivity and Specificity: The Eternal Tug-of-War

Two metrics quantify this trade-off.

Sensitivity (Recall, True Positive Rate)

"Among deals that were actually won, the proportion the engine correctly identified as 'will win.'"

High Sensitivity means never missing an opportunity. It catches every actual win. But if you push Sensitivity alone to its limit, the engine ends up labeling every deal as "will win" — and FP (False Positives) explode.

Specificity (True Negative Rate)

"Among deals that actually failed, the proportion the engine correctly identified as 'won't win.'"

High Specificity means never giving false hope. It accurately filters out losing deals. But if you push Specificity alone, the engine becomes extremely conservative, labeling even actual wins as "won't win." FN (False Negatives) explode.

The Sales Dilemma

This tug-of-war maps precisely to a dilemma faced daily on the sales floor:

- Aggressive sales strategy (Sensitivity-first): "Pursue anything with even a sliver of potential." → Never miss an opportunity, but resources scatter and wasted effort grows.

- Conservative sales strategy (Specificity-first): "Focus only on sure things." → Efficient, but hidden opportunities slip away.

The best sales organizations find the optimal balance point between these two. And that is exactly what Youden's J does.

4. Youden's J Index: The Math of Optimal Balance

Youden's J Index was proposed in 1950 by epidemiologist William Youden to evaluate diagnostic test performance. Its math is remarkably concise:

Or equivalently:

Where FPR (False Positive Rate) = 1 - Specificity.

Intuitive Interpretation

- J = 0: Engine performs at coin-flip level. No classification ability.

- J = 1: Perfect classifier. Correctly identifies every win and filters every loss.

- The point where J is maximized: The balance point where Sensitivity and Specificity are simultaneously at their highest.

Why J? — The Pitfall of Accuracy

One might ask: "Why not just maximize Accuracy?"

Simple Accuracy has a fatal weakness: it is vulnerable to Class Imbalance.

For example, suppose 90 of 100 deals were won and 10 lost. If the engine unconditionally shouts "will win" — with zero analysis — Accuracy hits 90%. But what about Youden's J?

- Sensitivity = 90/90 = 1.0 (caught every win)

- Specificity = 0/10 = 0.0 (filtered zero losses)

- J = 1.0 + 0.0 - 1 = 0.0

The J Index gives this kind of 'incompetent optimism' a score of zero. Even at 90% accuracy, if J is 0, the engine has no value.

This is why Auto-Tuner uses Youden's J — not Accuracy — as its optimization target.

5. Grid Search: The Power of Exhaustive Scanning

Now we need to find the parameter combination (T, k) that maximizes Youden's J. Auto-Tuner's method of choice is Grid Search.

Algorithm

Grid Search's principle is straightforward: "Try every possible combination, and pick the best one."

- Define the parameter space: Set each T-value from 0.1 to 1.0 in increments of 0.05, and k-value from 0.5 to 2.5 in increments of 0.1.

- Generate the grid: 5 Stages × 19 T-value candidates each × 21 k-value candidates = thousands of Grid Points.

- Evaluate each grid point: For every grid point, feed in historical deal data (Won/Lost) and compute Youden's J — how well P(Win) calculated with those parameters matches the actual outcomes.

- Select the optimum: Return the parameters at the grid point with the highest J as the optimal candidate.

Mathematical Procedure (Evaluating One Grid Point)

Fix one grid point. Call it θ = (T₁, T₂, ..., T₅, k).

For N historical deals:

Step 1. Recalculate Alpha/Beta for all activities in each deal using θ's T-values and k-value:

Where impact(i) is the impact score of the i-th signal.

Step 2. Compute the final P(Win) from the recalculated Alpha/Beta:

Step 3. Compare P(Win) of all deals against actual outcomes (Won/Lost) and generate the Confusion Matrix at various thresholds (e.g., 0.3, 0.4, 0.5, 0.6, ...).

Step 4. Calculate Sensitivity and Specificity at each threshold, and compute J.

Step 5. Record the maximum achievable J for this grid point θ.

Repeat this process for all grid points, and select the one with the highest J as the optimal parameters.

6. ROC Curve and Optimal Threshold

During Grid Search, a ROC Curve (Receiver Operating Characteristic Curve) is drawn for each grid point.

What Is a ROC Curve?

The ROC curve plots (FPR, TPR) pairs on a coordinate plane as the threshold is continuously varied from 0 to 1.

- X-axis: False Positive Rate (1 - Specificity)

- Y-axis: True Positive Rate (Sensitivity)

Shape and Meaning

- Top-left corner (0, 1): The position of a perfect classifier. FPR=0, TPR=1.

- Diagonal (y=x): Random guessing. No classification ability.

- The closer the curve to the top-left corner, the better the classifier.

The Relationship Between Youden's J and ROC

The point where Youden's J is maximized corresponds to the point on the ROC curve that has the greatest vertical distance from the diagonal (y=x).

The threshold at this point becomes the optimal classification cutoff for that parameter combination.

Business Implications

The ROC curve is a highly intuitive tool for sales leaders:

"Setting the threshold at 50% captures 82% of winning deals but also mislabels 15% of losing deals as 'will win.' Raising it to 60% drops win capture to 71%, but false positives fall to 6%."

Visually displaying these trade-offs and providing a mathematical answer to "Where is the right boundary line for our organization?" is precisely the role of ROC + Youden's J.

7. Grid Search: Limitations and Complements

Grid Search is powerful, but it has two inherent limitations.

Limitation 1: Discrete Scanning

Grid Search evaluates only points on the grid. With T-values scanned in 0.05 increments, if the true optimum is 0.223, it only evaluates 0.20 and 0.25 — missing everything in between. Increasing resolution improves precision but causes computation to grow exponentially.

Auto-Tuner's Solution: Grid Search serves as a first-pass filter. After locating the "approximate optimal region," the second phase — MCMC — performs fine-grained exploration in continuous space.

Limitation 2: Point Estimate

Grid Search delivers a single answer: "the optimum with J=0.74." But nearby parameters might yield similarly high J values. If T(Discovery)=0.22 gives J=0.74 and 0.21 gives J=0.73, is the difference statistically meaningful?

Auto-Tuner's Solution: MCMC explores the entire probability distribution around the optimum, providing an Interval Estimate: "Anywhere between 0.19 and 0.25 is safe with 95% probability."

This is why Auto-Tuner doesn't stop at Grid Search — it advances to MCMC Ensemble Sampling.

8. Business Scenario: Before/After

Let's return to the VP of Sales scenario from BA024.

Before (Manual Configuration)

| Parameter | Value | Rationale |

|---|---|---|

| T(Discovery) | 0.30 | "If the initial meeting goes well, half the battle is won" → Overestimation |

| T(Solution-Fit) | 0.80 | "Tech validation is important, I suppose" → Undervaluation |

| k | 1.50 | "Every single signal matters" → Over-reactivity |

Youden's J with these settings = 0.52. Correctly classifying 52 out of 100 deals.

After (Auto-Tuner Grid Search)

| Parameter | Value | Data-driven Rationale |

|---|---|---|

| T(Discovery) | 0.22 | Initial reactions contribute less to actual wins |

| T(Solution-Fit) | 0.87 | Technical fit is the decisive win factor |

| k | 1.34 | Excessive sensitivity suppressed, noise reduced |

Youden's J with these settings = 0.74. A 42% improvement.

The Real Difference

Of 106 historical deals, 11 deals that were FP (False Positive) under the Before settings — engine said "will win" but they failed — 8 were correctly reclassified as "at risk" under the After settings.

What this means: The sales resources invested in those 8 deals (averaging 3 months × 2 people per deal) would not have been wasted. Those resources would have been redeployed to deals with genuinely higher win potential.

The difference a single decimal in a parameter makes (0.30 → 0.22) translates to thousands of hours of sales effort and hundreds of thousands of dollars in opportunity cost annually.Summary

Grid Search and Youden's J form the first pillar of Auto-Tuner. To summarize what this stage does in one sentence:

"Try every possible parameter combination, and mathematically find the optimal balance point where 'winning deals are never missed AND losing deals are never given false hope.'"

But Grid Search only provides a point estimate: "this hill is the tallest." In the next post, we explore how MCMC Ensemble Sampling generates the interval estimate "and 256 explorers have reached consensus that this height is correct," and how 5-Fold Cross-Validation tests "will these results hold in the future."

📡 Next Episode

BA026. Consensus of the Particles — The Math of MCMC Ensembles and Cross-ValidationWhy exactly 256 walkers? What does R̂ convergence diagnostics guarantee? How does HDI 95% differ from a standard confidence interval? And why is 5-Fold Cross-Validation the ultimate method for catching "students who memorized the exam answers"? Dissecting Auto-Tuner's second and third pillars.

Bayesian EXAWin-Rate Forecaster

Precisely predict sales success by real-time Bayesian updates of subtle signals from every negotiation. With EXAWin, sales evolves from intuition into the ultimate data science.

![[BA03. On-Time Risk: Appendix 1] Anatomy of the EXA Bayesian Engine: Mixture Distributions and Observational Deviation](/_next/image?url=%2Fstatic%2Fimages%2FBA03_1.png&w=3840&q=75)